publications

2026

- CVPR

Inside-Out: Measuring Generalization in Vision Transformers Through Inner Workings. 2026. CVPR 2026 Highlight 🏆

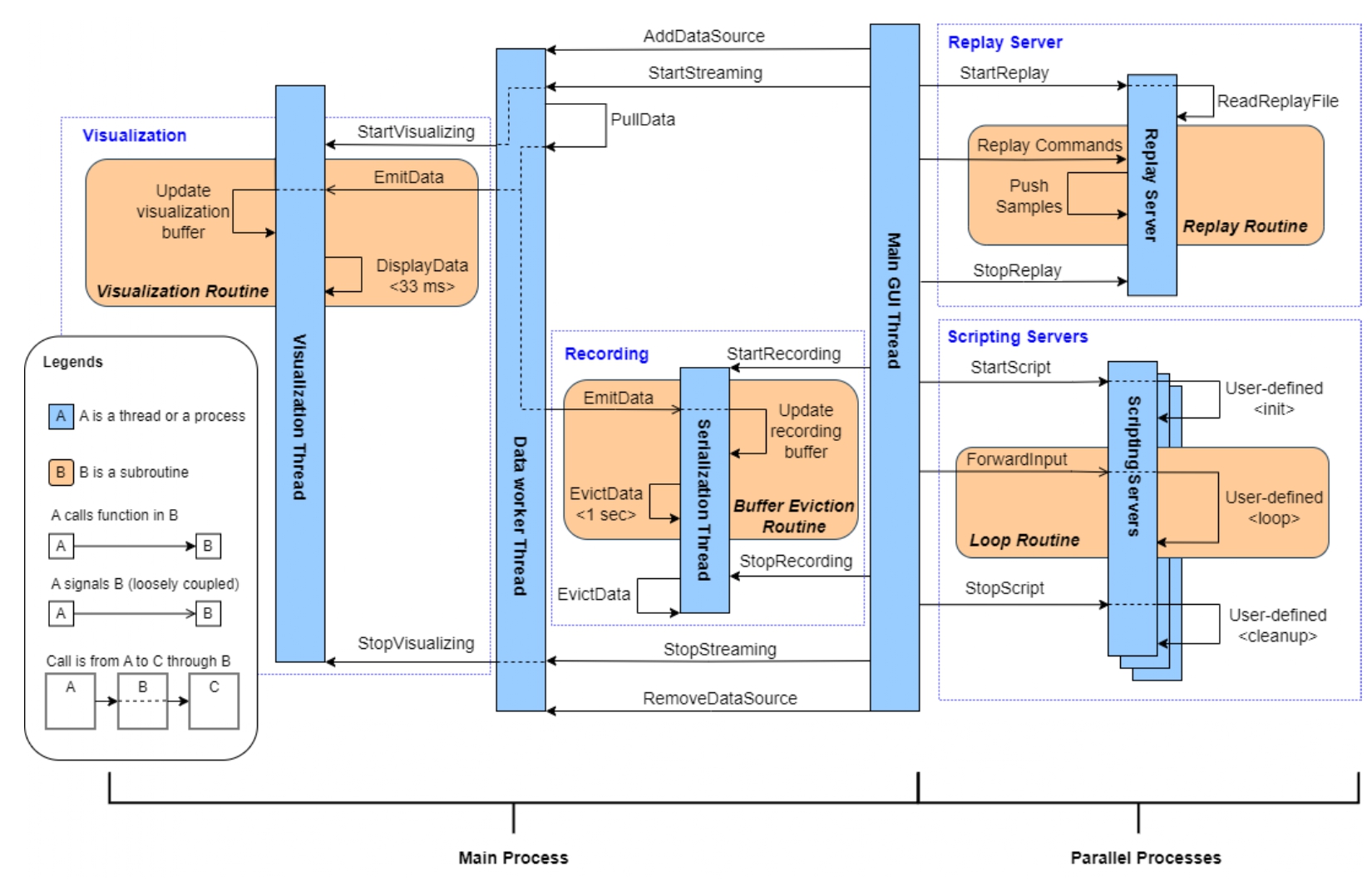

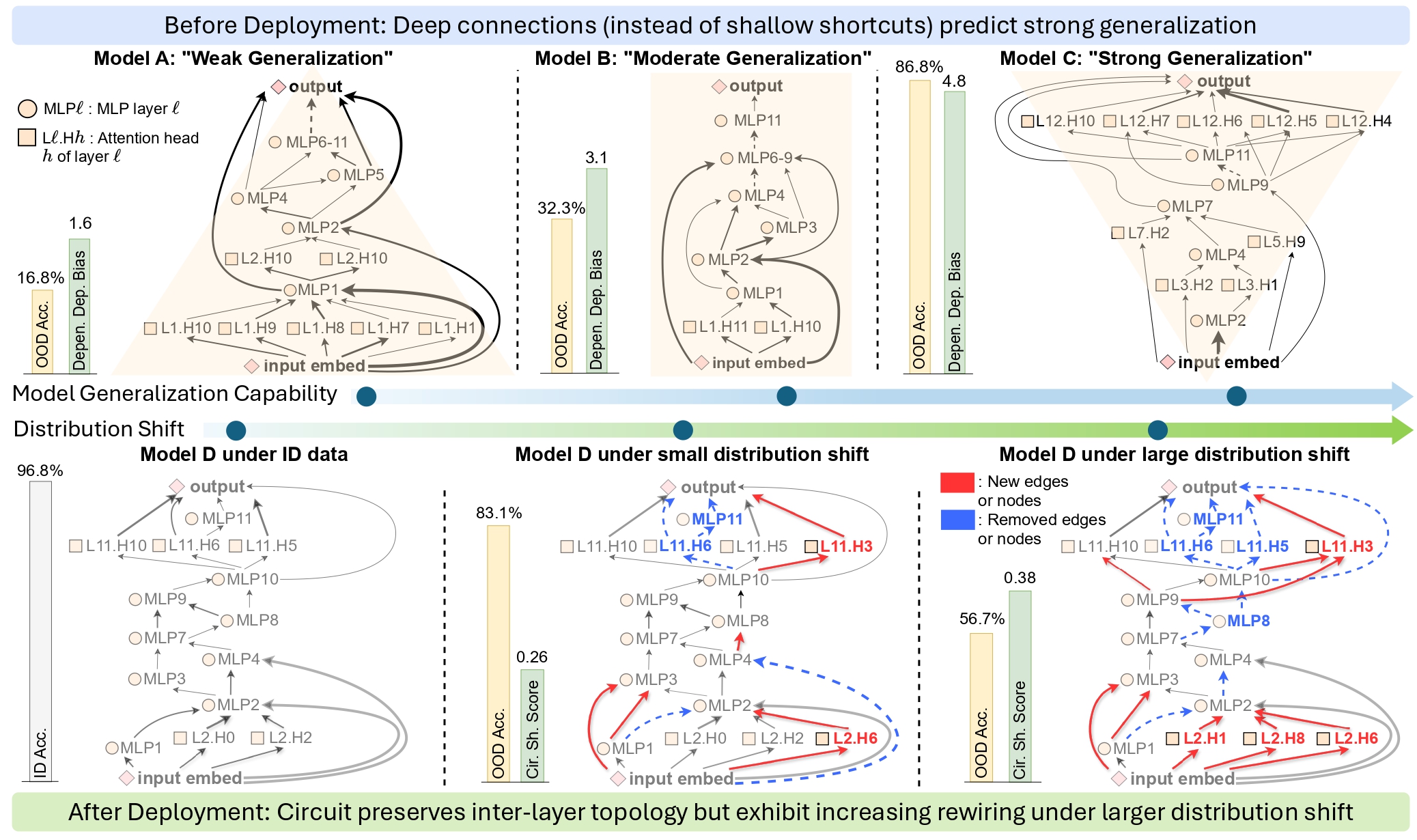

Reliable generalization metrics are fundamental to both the development and the evaluation of machine learning models. Evaluating generalization under distribution shift is especially pressing in high-stakes applications, where labeled target data are scarce. We focus on two practical scenarios: (1) Before deployment, how can we select the best model for unlabeled target data? (2) After deployment, how can we monitor model performance under distribution shift? Both cases call for a reliable, label-free proxy metric. Yet existing proxy metrics, such as model confidence or accuracy-on-the-line, are often unreliable because they assess only model outputs and ignore the internal mechanisms that produce them. We address this limitation with a new perspective: using a model's inner workings, i.e., its circuits, as a predictive metric of generalization performance. Leveraging circuit discovery, we extract the causal interactions between internal representations as a circuit, from which we derive two metrics tailored to the two scenarios. (1) Before deployment, we introduce Dependency Depth Bias, which measures the generalization capability of different models on target data. (2) After deployment, we propose Circuit Shift Score, which predicts a model's generalization under different distribution shifts. Across diverse tasks, both metrics show significantly stronger correlation with generalization performance, outperforming existing proxies by an average of 11.0% and 45.3%, respectively.
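The abstract judges each proxy by how well it correlates with generalization performance. As a hedged illustration of that kind of evaluation only (the definitions of Dependency Depth Bias and Circuit Shift Score are not reproduced here), the sketch below computes a Spearman rank correlation between hypothetical proxy scores and target accuracies for a set of candidate models. The choice of Spearman and all function names are assumptions, not the paper's protocol.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return ranks


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation; raises if either input is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_a * var_b)


def spearman(proxy_scores: Sequence[float], target_accuracies: Sequence[float]) -> float:
    """Spearman rank correlation = Pearson correlation of the average ranks."""
    if len(proxy_scores) != len(target_accuracies) or len(proxy_scores) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    return pearson(average_ranks(proxy_scores), average_ranks(target_accuracies))


# Hypothetical usage: one proxy score and one (held-out) target accuracy per model.
proxy = [0.42, 0.55, 0.31, 0.60]
accuracy = [0.71, 0.78, 0.65, 0.80]
rho = spearman(proxy, accuracy)
```

A rank correlation fits the model-selection scenario because only the ordering of candidate models matters when picking one; a value near 1 means the proxy ranks models the way their true target accuracy would.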

- ECCV

Seeing Is Not Believing: Detect and Interpret Cancer Segmentation Failures. 2026. Under review at ECCV 2026

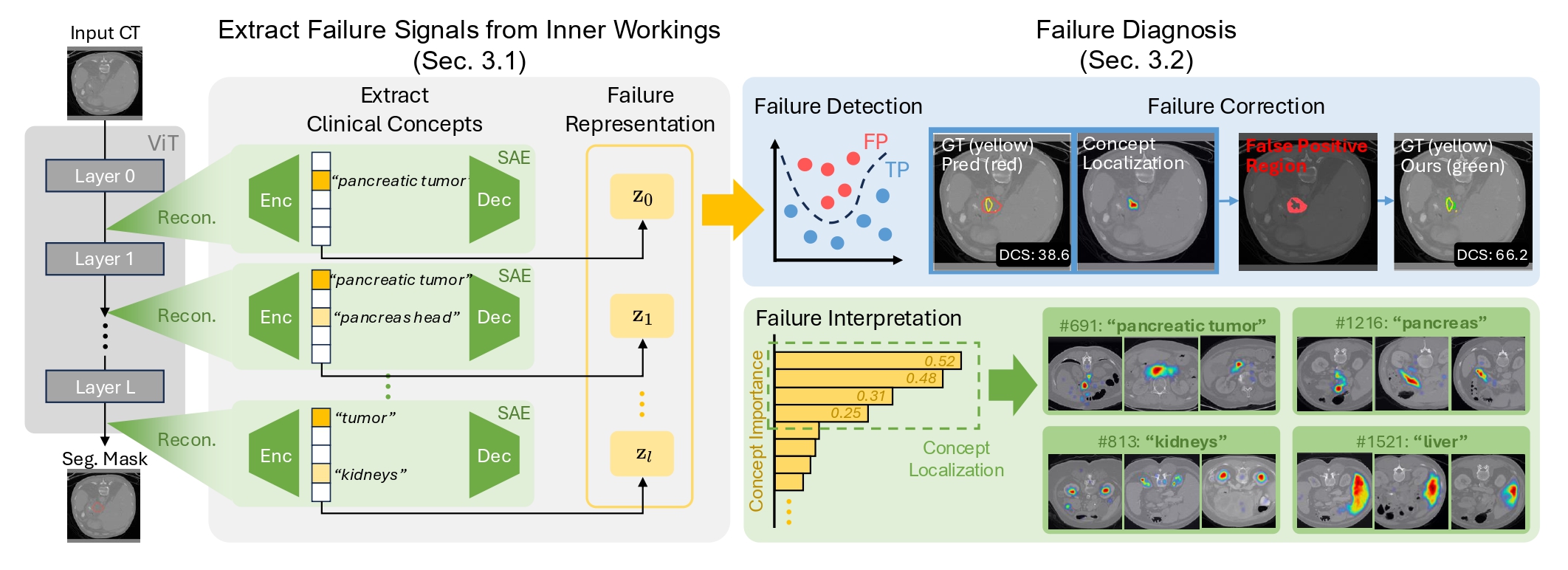

Accurate cancer segmentation is critical for clinical oncology, yet even state-of-the-art AI systems produce clinically significant errors. Current failure detection methods face two fundamental limitations: they cannot explain why failures occur, and improving detection sensitivity degrades segmentation quality, creating an untenable clinical trade-off between performance and reliability. We propose an approach that detects failures by examining a model's inner workings rather than its outputs. Using mechanistic interpretability tools such as sparse autoencoders (SAEs) to extract interpretable concepts, we find that segmentation models learn clinically meaningful concepts (e.g., "tumor," "gland margin," "transition zone") in their hidden layers. Crucially, true positive and false positive predictions exhibit strikingly different concept activation patterns: true positives activate significantly more concepts, with stronger magnitudes, revealing richer internal reasoning. We build hierarchical concept representations across network layers and train interpretable classifiers to identify failure patterns, enabling both accurate detection and human-understandable explanations. Experiments on prostate and pancreatic cancer datasets demonstrate that our approach outperforms output-based methods in failure detection while maintaining good segmentation quality.
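To make the "more concepts, stronger magnitudes" observation concrete, here is a minimal sketch of per-prediction concept features, assuming a standard ReLU sparse-autoencoder encoder `f = ReLU(W_enc (x - b_dec) + b_enc)` applied to a hidden activation `x`. It is not the paper's implementation: the toy weights, the thresholds, and the hand-set rule in the hypothetical `flag_possible_failure` stand in for the trained, hierarchical (multi-layer) classifiers the abstract describes.

```python
from typing import List, Sequence, Tuple


def sae_encode(
    x: Sequence[float],
    w_enc: Sequence[Sequence[float]],
    b_enc: Sequence[float],
    b_dec: Sequence[float],
) -> List[float]:
    """ReLU SAE encoder: one non-negative activation per learned concept."""
    centered = [xi - bi for xi, bi in zip(x, b_dec)]
    return [
        max(0.0, sum(w * c for w, c in zip(row, centered)) + b)
        for row, b in zip(w_enc, b_enc)
    ]


def concept_summary(acts: Sequence[float], eps: float = 1e-6) -> Tuple[int, float]:
    """Number of active concepts and their mean activation magnitude."""
    active = [a for a in acts if a > eps]
    mean_mag = sum(active) / len(active) if active else 0.0
    return len(active), mean_mag


def flag_possible_failure(acts: Sequence[float], min_active: int, min_magnitude: float) -> bool:
    """Hypothetical hand-set rule: few or weak concepts -> flag for review."""
    n_active, mean_mag = concept_summary(acts)
    return n_active < min_active or mean_mag < min_magnitude


# Toy 2-d activations and a 4-concept encoder (illustrative values only).
W_ENC = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [1.0, 1.0]]
B_ENC = [0.0, 0.0, 0.0, -0.5]
B_DEC = [0.0, 0.0]

rich = sae_encode([1.0, 0.5], W_ENC, B_ENC, B_DEC)    # many strong concepts
sparse = sae_encode([0.1, 0.0], W_ENC, B_ENC, B_DEC)  # few weak concepts
```

In practice such counts and magnitudes would be collected at several layers and fed to a learned, interpretable classifier rather than fixed thresholds.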

- ISMRM

Learning-Based Synthetic MRI Post-Processing Framework for Automated Contrast Optimization and Brain Segmentation. 2026. ISMRM 2026

Synthetic MRI (SynMRI) enables the computation of quantitative tissue maps (T1 and T2 relaxation times and proton density, PD) that can be used to synthesize various contrast images. Conventional T1W and T2W structural MRI scans and SynMRI post-acquisition pipelines, however, rely on manually chosen contrast parameters such as repetition time (TR), echo time (TE), and inversion time (TI), which are tuned through expert experience and visual inspection. This manual approach can produce inconsistent contrasts across sites and is inefficient for large-scale studies. To address these challenges, we present a physics-informed, learning-based framework that automatically adjusts these parameters jointly with downstream tasks. Inspired by the idea of jointly optimizing data transformations and downstream models, the proposed framework incorporates MRI physics as a differentiable transformation layer, enabling the simultaneous optimization of contrast parameters and downstream segmentation modules.
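The idea of MRI physics as a differentiable layer can be sketched in a few lines. This is a hedged toy, not the framework itself: it assumes a phase-sensitive inversion-recovery spin-echo signal model, `S = PD * (1 - 2*exp(-TI/T1) + exp(-TR/T1)) * exp(-TE/T2)` (valid for TE much shorter than TR), writes out its analytic gradients with respect to TR, TE, and TI, and replaces the segmentation module with a hypothetical two-tissue contrast objective. The tissue values, bounds, and function names are illustrative assumptions.

```python
import math

# Hypothetical tissue parameters (PD in arbitrary units, T1 and T2 in ms).
# Placeholder values for the sketch, not measurements.
TISSUE_A = (0.70, 830.0, 80.0)
TISSUE_B = (0.80, 1330.0, 110.0)

# Hypothetical bounds (ms) keeping TR, TE, TI in a plausible range.
BOUNDS = {"tr": (500.0, 10000.0), "te": (5.0, 200.0), "ti": (50.0, 3000.0)}


def synth_signal(pd, t1, t2, tr, te, ti):
    """Phase-sensitive inversion-recovery spin-echo signal (assumes TE << TR)."""
    return pd * (1 - 2 * math.exp(-ti / t1) + math.exp(-tr / t1)) * math.exp(-te / t2)


def signal_grad(pd, t1, t2, tr, te, ti):
    """Analytic partial derivatives of synth_signal w.r.t. (TR, TE, TI)."""
    decay = math.exp(-te / t2)
    recovery = 1 - 2 * math.exp(-ti / t1) + math.exp(-tr / t1)
    d_tr = pd * (-math.exp(-tr / t1) / t1) * decay
    d_te = pd * recovery * (-decay / t2)
    d_ti = pd * (2 * math.exp(-ti / t1) / t1) * decay
    return d_tr, d_te, d_ti


def contrast_objective(tr, te, ti):
    """Squared A-vs-B contrast and its gradient; stand-in for a downstream loss."""
    c = synth_signal(*TISSUE_A, tr, te, ti) - synth_signal(*TISSUE_B, tr, te, ti)
    ga = signal_grad(*TISSUE_A, tr, te, ti)
    gb = signal_grad(*TISSUE_B, tr, te, ti)
    grad = tuple(2 * c * (da - db) for da, db in zip(ga, gb))
    return c * c, grad


def ascent_step(params, lr):
    """One projected gradient-ascent step on (TR, TE, TI), clipped to BOUNDS."""
    _, grad = contrast_objective(*params)
    new = []
    for name, p, g in zip(("tr", "te", "ti"), params, grad):
        lo, hi = BOUNDS[name]
        new.append(min(hi, max(lo, p + lr * g)))
    return tuple(new)


# Usage: start from a hand-picked setting and take a few ascent steps.
params = (3000.0, 20.0, 400.0)
for _ in range(10):
    params = ascent_step(params, lr=1e4)
```

In the framework the abstract describes, the gradient would come from the segmentation loss through the synthesized images (typically via automatic differentiation) rather than from a hand-written contrast objective; the analytic gradients here only keep the sketch dependency-free.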

2025

- ICML

"Why Is There a Tumor?": Tell Me the Reason, Show Me the Evidence. Mengmeng Ma, Tang Li, Yunxiang Peng, and 5 more authors. In Forty-second International Conference on Machine Learning, 2025

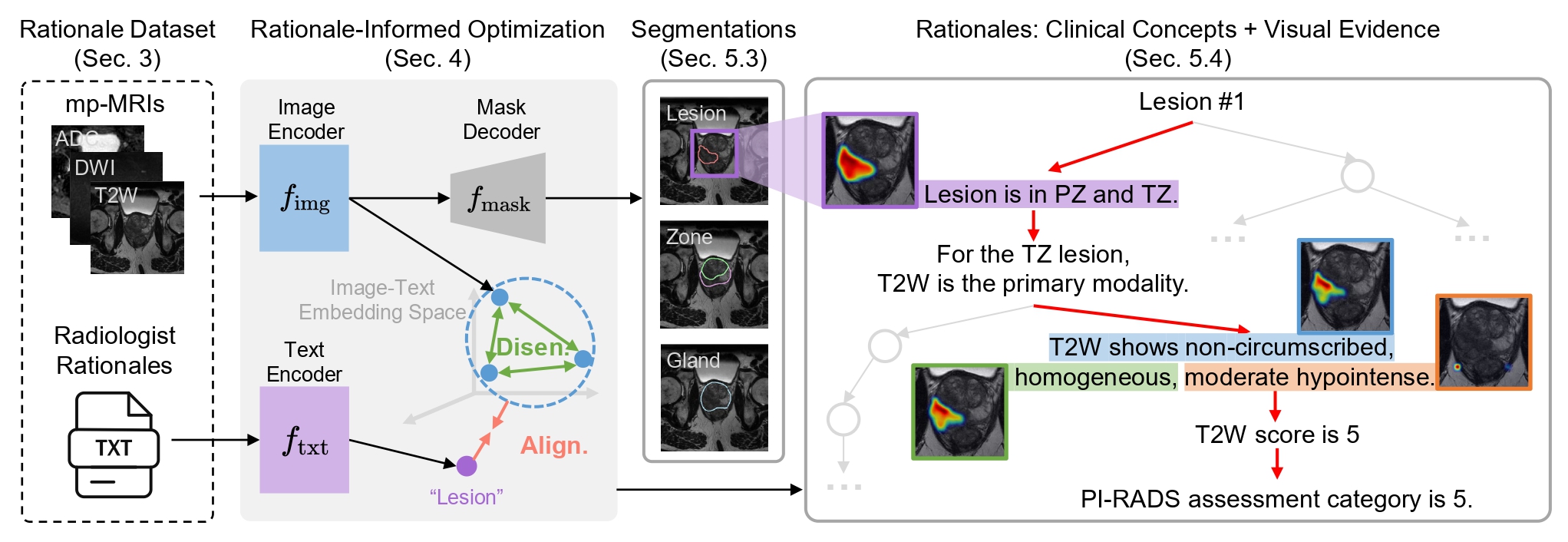

Medical AI models excel at tumor detection and segmentation. However, their latent representations often lack explicit ties to clinical semantics, so their outputs are less trusted in clinical practice. Most existing models generate either segmentation masks or labels (localizing where without explaining why) or textual justifications (explaining why without showing where), failing to ground clinical concepts in spatially localized evidence. To bridge this gap, we propose models that justify their segmentation or detection outputs in clinically relevant terms and point to the supporting visual evidence. We address two core challenges. First, to overcome the lack of paired images, annotations, and textual rationales for training, we curate a rationale dataset of 180K image-mask-rationale triples whose quality was evaluated by expert radiologists. Second, we design a rationale-informed optimization that disentangles and localizes fine-grained clinical concepts in a self-supervised manner, without requiring pixel-level concept annotations. Experiments across medical benchmarks show that our model achieves superior performance in segmentation, detection, and beyond.